Group Of Texas Retail Providers Suggests Requiring That Power To Choose Site List Annual Estimated Average Price For REP Plans


In comments filed with the Public Utility Commission of Texas in a new project reviewing the Power to Choose site, the Texas Energy Association For Marketers (TEAM) recommended that the site display the Annual Estimated Average Price for each retail plan it lists.

"TEAM members’ experiences, as well those of numerous consumers who have posted comments regarding the Website, suggest that the most difficult aspect of the website is to convey total average price in a manner so that the customers can readily compare the impact of different products on their bill. The current display of 'total average prices' at three usage levels comports with the Commission’s rule on electricity facts labels (EFLs). We do not support a modification that would require a rule change," TEAM said

"However, there would be no rule change necessary to also require REPs to provide the Annual Estimated Average Price by applying the individual product pricing to the Commission-approved residential load profile," TEAM said

"Addition and use of this column as the default sort mechanism would allow competitive forces to work -- and would eliminate the current incentives for REPs to design products that produce the lowest average price at [1000 kWh], but not the lowest price product based on a typical usage pattern of a customer with an annual average usage of 1000kWh," TEAM said

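The gap TEAM describes, between the average price at exactly 1,000 kWh and the price over a year of varying usage, can be shown with a minimal sketch. This is not TEAM's or the Commission's calculation: the monthly usage profile, the two plans, their prices, and every function name below are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical retail plan; all fields are illustrative assumptions."""
    energy_cents_per_kwh: float
    base_charge_usd: float = 0.0
    credit_usd: float = 0.0       # bill credit, if any
    credit_min_kwh: float = 0.0   # credit applies at or above this monthly usage


def monthly_bill_usd(plan: Plan, kwh: float) -> float:
    """Bill for one month at the given usage."""
    bill = plan.base_charge_usd + plan.energy_cents_per_kwh * kwh / 100.0
    if plan.credit_usd and kwh >= plan.credit_min_kwh:
        bill -= plan.credit_usd
    return bill


def average_price_cents(plan: Plan, kwh: float) -> float:
    """Average price (cents/kWh) at a single usage level."""
    return 100.0 * monthly_bill_usd(plan, kwh) / kwh


def annual_estimated_average_price_cents(plan: Plan, monthly_kwh: list[float]) -> float:
    """Total annual cost divided by total annual usage, in cents/kWh."""
    total_usd = sum(monthly_bill_usd(plan, k) for k in monthly_kwh)
    return 100.0 * total_usd / sum(monthly_kwh)


# Hypothetical seasonal profile: 12,000 kWh/year, i.e. 1,000 kWh/month on average.
PROFILE = [800, 700, 700, 750, 950, 1300, 1500, 1500, 1250, 950, 750, 850]

# Hypothetical plans: one with a usage-triggered bill credit, one flat rate.
credit_plan = Plan(energy_cents_per_kwh=12.0, credit_usd=50.0, credit_min_kwh=1000)
flat_plan = Plan(energy_cents_per_kwh=9.0)

for name, plan in [("credit", credit_plan), ("flat", flat_plan)]:
    at_1000 = average_price_cents(plan, 1000)
    annual = annual_estimated_average_price_cents(plan, PROFILE)
    print(f"{name}: {at_1000:.1f} c/kWh at 1000 kWh, {annual:.1f} c/kWh annual")
```

Under these assumed numbers, the credit plan shows 7.0 cents/kWh at 1,000 kWh against 9.0 for the flat plan, but its annual figure is about 10.3 cents/kWh against 9.0, because the credit applies in only four of the twelve months.
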
TEAM further noted that, "Various commentators have asked the Commission to require REPs to post 'flat' products that do not include fees, which would be both against free market principles and would prevent REPs from offering plans that pass through TDU charges. This would also prevent customers from being presented with plans which are designed to benefit specific preferences and usage patterns. It is important to avoid tendencies to skew the Website toward traditional product designs, rather than fairly and equally presenting innovative products such as those that are time-of-use based. Such products can lead to less demand during critical pricing/demand periods."

TEAM also addressed the listing of TDU fees.

"Another aspect affecting customer experiences on the price comparison function of powertochoose.com is the timeliness by which REPs modify the posted prices to reflect changes in applicable TDU rates and/or other pass-through fees. Based on the number and frequency of TDU rate changes in any particular month, it is difficult for REPS to reliably track this information. It would be highly beneficial to customers if the TDUs could be asked to populate a standardized spreadsheet with their applicable rates for residential and small commercial customers. Despite the confusion and errant news reports, all prices on the website include prices from the EFL, and those prices are inclusive of all charges, including utility delivery charges. That said, there can be an issue related to the timeliness by which REPs modify the posted prices to reflect changes in applicable TDU rates. Utility rate information can be difficult for most REPs, especially smaller REPs, to monitor and incorporate into pricing," TEAM said

"This is because utilities often have changes to various factors affecting the energy charge, and at certain times will have a complete change due to a base rate case. The Commission has been very responsive to REP requests to limit utility charge changes to March and September, and provides a helpful update of all charges in March and September. However, there remain several changes throughout the year to the utility recurring charge that a REP must monitor in order to maintain current information for its products. In addition to base rate changes, REPs must monitor for transition charge updates, rate case expense recovery factors, nuclear decommissioning factors, tax rate changes, energy efficiency cost recovery, etc. These can occur throughout the year and are not limited to March and September," TEAM said

"The Commission could facilitate understanding of utility charges by the public with more education about these charges, including the fact that REPs must include these costs, either bundled or pass through, with the average price calculation. The Commission could also assist REPs and the public if it facilitated posting of the charges for customer and energy charges for each utility, updated as new tariffs are approved. A model for reporting the charges exists for utilities outside of ERCOT, where rate information is provided by the utility to rate regulation staff, who compile the information and post it monthly," TEAM said

"An alternative would be to request utilities to post this information in a standardized format on their website or at ERCOT. It is assumed the utilities would work with the Commission to post this information, though a rulemaking may ultimately be required to ensure consistency. Posting this education and rate information would benefit the public significantly, as REPs would all have a reliable information source for pricing and disclosure purposes," TEAM said

TEAM also listed concerns with the current complaint scorecard methodology on Power to Choose and proposed an alternative.

Among other things, TEAM said that the current grouping of REPs into one of five complaint score levels, regardless of the statistical significance of differences between REPs’ respective ratios, presents a skewed picture of individual REPs’ performance. The current mechanism, TEAM said, "damages REPs who might offer the most competitive prices and actually provide excellent customer service but are overlooked because customers are given the impression that a 3/5 ranking is a 'C' grade."

"REPs with a legacy customer base can absorb routine contacts without any change in stars, while REPs that have a lesser market share taking on the same risk and cost to serve customers can see their star rating plummet because of a single question which may have nothing to do with the REP’s provision of service or compliance with Commission rules," TEAM said

Furthermore, TEAM said that, "Including commercial customers in REP customer counts and complaint ranking confounds the metric. Commercial customers do not shop on the Website, experience different concerns than residential customers, and can represent different proportions of a given REP’s book of business. As the Website is primarily -- and, to the extent that products are offered, exclusively -- oriented towards residential customers, the inclusion of small commercial customers in the metrics may significantly affect an REP’s ranking in a manner that does not speak to that REP’s residential customers."

TEAM said that the current methodology, "can penalize REPs that accommodate more diverse market segments -- REPs which choose to provide more options to at-risk customers may receive more disconnection or billing-related contacts regardless of any wrongdoing or lack of customer service. This is especially true in light of market changes or new service area openings, such as Sharyland’s opening to retail competition and, shortly, Lubbock’s joining ERCOT."

TEAM also said that, "The current public complaint reporting system does not appear to distinguish between inquiries and complaints; to the extent that a customer contact may be treated as an inquiry that does not affect an REP’s star rating as opposed to a complaint which does, the decision appears to be subjective. Customers may choose to contact the Commission’s staff for any number of reasons which either cannot or should not be considered a 'complaint' against the REP. Examples include TDU charges which are passed through to the customer without markup as described on the customer’s Electricity Facts Label and over which the REP has no control, general requests regarding payment terms and deferred payment plans which the customer chose to direct to the Commission for reasons unrelated to the REP’s provision of information or service. Other times a customer may contact the Commission regarding a dispute between the customer and other parties over which the REP has no control. Where the customer did not mention the REP directly and the dispute was not directed to the REP but was instead a matter involving the TDU, ERCOT, a broker, or some other third party, the customer contact should not be registered against the REP."

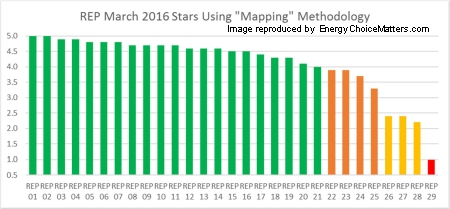

TEAM proposed a complaint "mapping" mechanism which would rank REPs from 5 stars to 1 star relative to the market mean.

In an illustrative example included in its comments (using EIA data from March 2016 for REP customer count), each REP’s number of contacts ('complaints' listed on the Website for the REP) for the six months ending March 2016 was multiplied by 1,000 and divided by the REP’s estimated customer count, yielding 'contacts per thousand customers'. The resulting figures were compared statistically and mapped to scores of 1.0 to 5.0. The REP with the fewest contacts per thousand customers was mapped to a score of 5.0, the REP with the most contacts was mapped to 1.0, and the rest were assigned intermediate scores based on their respective contacts per thousand customers compared to the mean. An REP whose number of contacts equaled the mean for all REPs would have a score of 3.0 -- representing a true "average performance" compared to all other REPs, TEAM said.
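
The story does not give the exact function TEAM used to assign intermediate scores. As a rough sketch only, the version below assumes a piecewise-linear mapping anchored at the three points the comments describe (fewest contacts at 5.0, the mean at 3.0, most contacts at 1.0) and an unweighted mean of the per-REP rates. The REP names, contact counts, customer counts, and function names are all hypothetical.

```python
def contacts_per_thousand(contacts: int, customers: int) -> float:
    """Contacts multiplied by 1,000 and divided by customer count."""
    return contacts * 1000.0 / customers


def map_scores(rates: dict[str, float]) -> dict[str, float]:
    """Map contact rates to 1.0-5.0 scores.

    ASSUMPTION: piecewise-linear interpolation between (min, 5.0),
    (mean, 3.0) and (max, 1.0). The source only states those three
    anchor points, not the shape of the curve between them.
    """
    lo, hi = min(rates.values()), max(rates.values())
    mean = sum(rates.values()) / len(rates)  # assumed unweighted mean
    scores = {}
    for rep, rate in rates.items():
        if rate <= mean:
            span = mean - lo
            # If every REP has the same rate, all sit at the mean.
            score = 3.0 if span == 0 else 3.0 + 2.0 * (mean - rate) / span
        else:
            score = 3.0 - 2.0 * (rate - mean) / (hi - mean)
        scores[rep] = round(score, 1)
    return scores


# Hypothetical REPs: (contacts over the period, estimated customer count).
reps = {
    "A": (5, 10_000),
    "B": (20, 20_000),
    "C": (15, 10_000),
    "D": (100, 50_000),
    "E": (50, 5_000),
}

rates = {name: contacts_per_thousand(c, n) for name, (c, n) in reps.items()}
scores = map_scores(rates)
print(scores)
```

With these made-up inputs, one REP with a very high contact rate pulls the mean up, so four of the five REPs land above 3.0. That is the same qualitative pattern TEAM reports for its own example, though the numbers here are not TEAM's.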

In its illustrative example, TEAM said that, of the 29 REPs, 14 (48%) scored 4.6 or above. Twenty-one of the 29 scored 4.0 or above; i.e., 72% of the market scored in the top 20% of performance. Of the 8 remaining REPs, 4 scored between 3.3 and 3.9, 4 scored below 3.0, and only one scored 1.0. "Thus, when every REP’s performance is measured against mean REP performance, the majority of the market performs above average; a small number of REPs cause a disproportionate number of complaints," TEAM said.

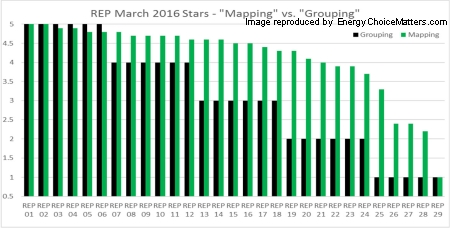

In contrast, TEAM said that the current "grouping" methodology, if used in the illustrative example, forces the six REPs representing the "middle of the pack" into the three-star quintile, which a casual observer would assume represents REPs scoring between 41% and 60% (i.e., 3 out of 5).

"In reality, those six REPs serve the market at well above the mean, with mapped scores ranging from 4.4 to 4.6. The six REPs that would be assigned to the four-star quintile have scores ranging from 4.7 to 4.8, while the six REPs in the fifth quintile have scores ranging from 4.9 to 5.0. These twelve REPs reflect mapped scores that range across 10% of the total scale (4.7 to 5.0), yet the grouping methodology would suggest that their performance ranges from 61% to 80% for 4 star REPs and 81% to 100% for 5 star REPs. The 18 REPs that would be placed in the top three quintiles -- three to five stars, an apparent scale of 41% to 100% performance -- actually place in the top 86% of rankings between the highest ranking and lowest ranking REPs," TEAM said

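The "grouping" effect TEAM criticizes can be sketched as a rank-based split into five roughly equal groups. The story does not describe the Commission's actual scorecard calculation, so the equal-size split, the function name, and the contact rates below are assumptions made for illustration.

```python
from collections import Counter


def quintile_stars(rates: dict[str, float]) -> dict[str, int]:
    """Assign 5 to 1 stars by rank alone (fewest contacts first).

    ASSUMPTION: REPs are split into five roughly equal groups by rank,
    ignoring how far apart their contact rates actually are.
    """
    ranked = sorted(rates, key=rates.get)
    n = len(ranked)
    return {rep: 5 - (i * 5) // n for i, rep in enumerate(ranked)}


# Hypothetical contacts per thousand customers for five REPs.
rates = {"A": 0.5, "B": 1.0, "C": 1.5, "D": 2.0, "E": 10.0}
stars = quintile_stars(rates)
mean_rate = sum(rates.values()) / len(rates)

for rep in sorted(rates, key=rates.get):
    side = "below" if rates[rep] < mean_rate else "at or above"
    print(f"{rep}: {stars[rep]} stars, rate {rates[rep]} ({side} mean {mean_rate})")

# Group sizes when the same rule is applied to 29 placeholder REPs.
group_sizes = Counter(
    quintile_stars({f"REP{i}": float(i) for i in range(29)}).values()
)
print(dict(group_sizes))
```

In this made-up data, REP C receives three stars and REP D two stars even though both have contact rates well below the mean, because rank alone decides the group. Applied to 29 placeholder REPs, the same rule puts six REPs in each of the top three groups, which is consistent with the group sizes quoted from TEAM's example.
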
Charts reflecting TEAM's illustrative example accompany this story. The first chart shows REP scores using the mapping methodology. The second chart compares these mapping scores to how REPs are scored using the current system.

The Alliance for Retail Markets (ARM) separately filed topics for discussion at an upcoming workshop concerning the Power to Choose site.

ARM recommended the workshop agenda include the following general topics for discussion:

1. Statutory Basis for Power to Choose Website

2. Current Scope of Website Functionalities

3. Continued Viability of Website Functionalities

4. Explanation and Clarification of Complaint Scorecard Methodology

5. Improvements in REP Posting of Offers on Website

6. Improvements in Customer Use of the Website

7. Use of Term "Power to Choose" by Private Entities/Appearance of Term in Online Searches

ARM did not offer any substantive recommendations on these topics in its comments.

Project 49052

Copyright 2010-16 Energy Choice Matters. If you wish to share this story, please email or post the website link; unauthorized copying, retransmission, or republication prohibited.

TEAM Proposes New Complaint Scoring Mechanism, Mapping Complaints Against Market Mean

January 10, 2019



Reporting by Paul Ring • ring@energychoicematters.com
